Wikipedia's traffic drop: more on languages and freshness

Second quick post on Wikipedia’s pageview decline. The first post looked at ~4,000 career articles on English Wikipedia. This one widens the lens to 5,000 articles across eight major-language Wikipedias and asks a sharper question: which topics are dropping, and is the pattern the same everywhere?

#Disclaimer the first

Page views are not the only measure of Wikipedia’s impact, so take this with a grain of salt and think about the other ways we have impact (including by serving as a knowledge base for LLMs). Still, page views are one important channel through which we fulfill our mission and live our values, so I think they’re worth exploring, even knowing they aren’t the be-all and end-all.

#Disclaimer the second

I’m trying very hard not to draw conclusions. It’s obvious that part of the story is LLMs, and probably the biggest part. But it is also very much a story about social media, and apps, and Google SEO, and and and. If you have questions, find me on social; I’ll try to answer or dig more into the data if I can.

#TL;DR

Compared with a 2016–2019 baseline, aggregate monthly pageviews of Wikipedia’s “Vital Articles” are down 26% across the eight major languages I sampled (en, es, fr, de, it, pt, ja, ar). The Vital Articles are an imperfect set, but they cover a much broader range of topics than my last sample and are widely replicated across wikis. (All of these wikis have at least 80% of the articles, which makes the comparison more apples-to-apples.)

The decline isn’t even across topics. Mathematics, physical sciences, and technology are down 43% to 85%; biographical articles and geography are down less than 10% in half the languages I looked at. The per-topic ordering (which topics have declined the most and which the least) is nearly identical in all eight languages.

Freshness of article content matters, but not as strongly as topic.

#What I did (briefly)

With help from Claude Code, I pulled Wikipedia’s on-wiki Level-5 Vital Articles list: 39,707 articles editorially curated into 11 topic buckets (Arts, Biology, Everyday life, Geography, History, Mathematics, People, Philosophy and religion, Physical sciences, Society and social sciences, Technology). I sampled 5,000 of them, stratified across buckets. For each sampled article, I fetched monthly pageviews from January 2016 through March 2026 in every major language where a sitelink existed.

I fetched 12 languages, each of which has at least 80% of the sampled articles. Four of them (zh, ru, fa, and uk) have major confounders, some combination of restricted network access, embargo, and war, so for now I’ve left them out of the analysis. For the remaining eight, I compared a 2016–2019 pre-LLM baseline window against a recent window of April 2025 through March 2026.
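The post doesn’t say exactly how the stratification or the 80% coverage check were done. Here is a minimal sketch, assuming proportional allocation (each bucket gets the same share of the sample that it has of the full list) with largest-remainder rounding. `stratified_sample` and `languages_with_coverage` are hypothetical helpers for illustration, not code from the repo:

```python
import random
from collections import defaultdict


def stratified_sample(articles, n_total, seed=0):
    """Sample n_total titles so each bucket keeps its share of the full list.

    `articles` is a list of (title, bucket) pairs. Uses proportional
    allocation with largest-remainder rounding (an assumption for illustration).
    """
    by_bucket = defaultdict(list)
    for title, bucket in articles:
        by_bucket[bucket].append(title)
    total = len(articles)

    # Integer arithmetic: floor of each bucket's exact quota, plus the remainder.
    alloc = {b: n_total * len(t) // total for b, t in by_bucket.items()}
    remainder = {b: n_total * len(t) % total for b, t in by_bucket.items()}

    # Hand the leftover slots to the buckets with the largest remainders.
    leftover = n_total - sum(alloc.values())
    for b in sorted(remainder, key=remainder.get, reverse=True)[:leftover]:
        alloc[b] += 1

    rng = random.Random(seed)
    sample = []
    for b in sorted(by_bucket):  # sorted for a reproducible draw order
        sample.extend(rng.sample(by_bucket[b], alloc[b]))
    return sample


def languages_with_coverage(present_by_lang, sample, threshold=0.8):
    """Languages whose wiki has at least `threshold` of the sampled titles.

    `present_by_lang` maps a language code to the set of sampled titles
    that have a sitelink on that wiki.
    """
    wanted = set(sample)
    return sorted(
        lang for lang, present in present_by_lang.items()
        if len(present & wanted) / len(wanted) >= threshold
    )


# Toy list: 10 Arts, 20 Biology, 70 Mathematics titles; draw 7.
articles = ([(f"A{i}", "Arts") for i in range(10)]
            + [(f"B{i}", "Biology") for i in range(20)]
            + [(f"M{i}", "Mathematics") for i in range(70)])
sample = stratified_sample(articles, 7)
```

With these toy sizes the exact quotas are 0.7, 1.4, and 4.9, so the floors give 0, 1, and 4, and the two leftover slots go to Mathematics and Arts (largest remainders), for a 1/1/5 split.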

Full code and pipeline in the open-source repo under analysis/vital-articles/.

#Confirming: decline is widespread

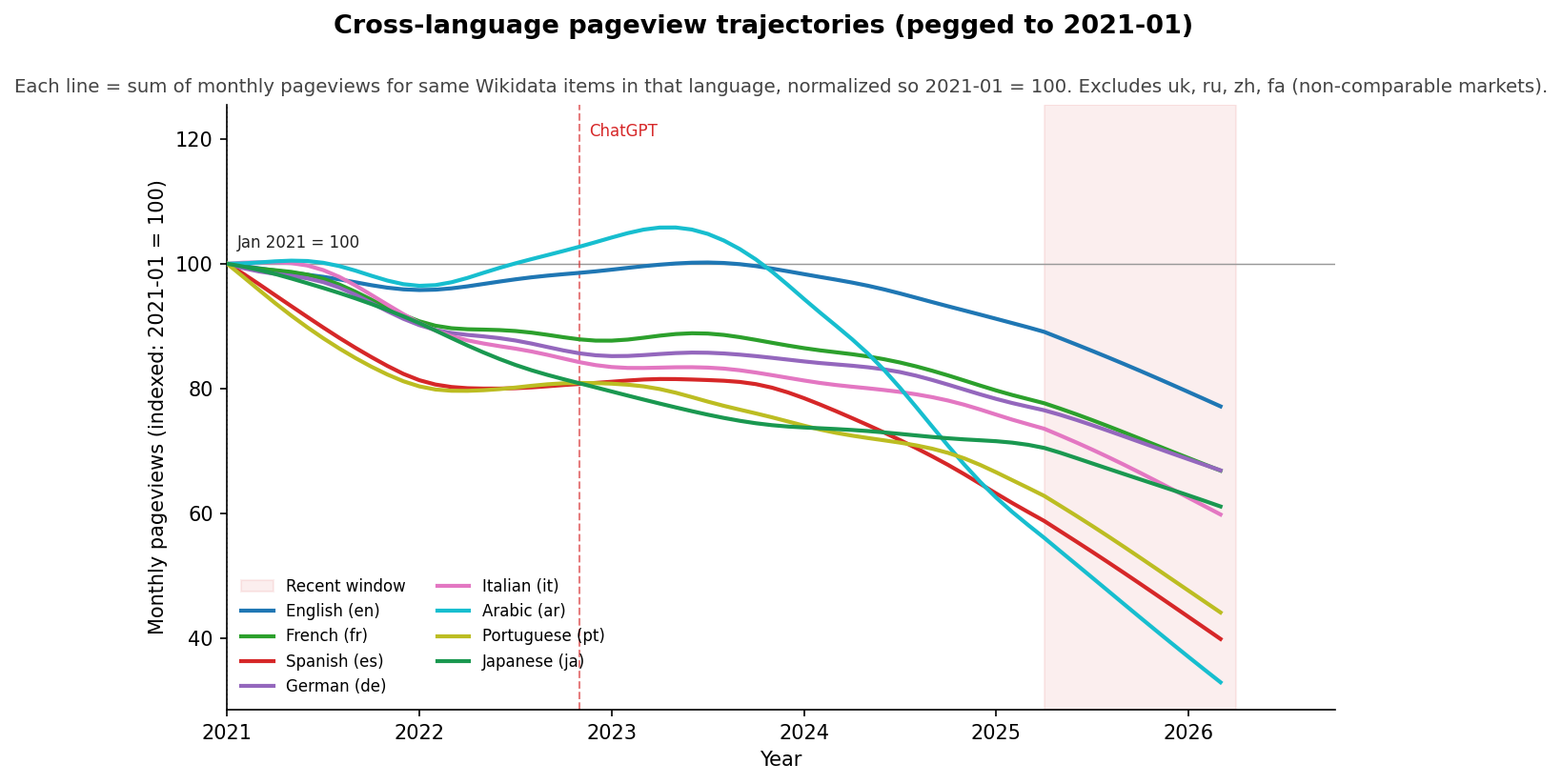

The obvious question after my last post was “what about languages other than English?” Here are monthly pageview trajectories for the eight languages in the analysis, each indexed so that its January 2021 pageview level equals 100.

January 2021 is a reasonable anchor because it’s past the immediate COVID spike of 2020 but before any plausible LLM effect (ChatGPT launched in November 2022). So this chart asks, “compared to where each Wikipedia was in early 2021, where is it now?”
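The indexing itself is simple arithmetic: divide every month by the anchor month and multiply by 100. A minimal sketch, assuming each language’s series is stored as a hypothetical `{"YYYY-MM": views}` dict (not necessarily the repo’s actual data structure):

```python
def index_to_base(monthly_views, base_month="2021-01"):
    """Rescale a {"YYYY-MM": views} series so that base_month equals 100."""
    base = monthly_views[base_month]
    # "YYYY-MM" keys sort lexically in chronological order.
    return {month: 100 * views / base for month, views in sorted(monthly_views.items())}


# A value of 75 means traffic is 25% below its January 2021 level.
series = {"2021-01": 200_000, "2026-03": 150_000}
print(index_to_base(series))  # {'2021-01': 100.0, '2026-03': 75.0}
```

Because every line starts at 100 in the anchor month, the chart compares each language’s trajectory rather than its absolute size.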

English is losing the least, which is probably the opposite of what a naive “more LLM exposure → more decline” story would predict. English has huge ChatGPT and Claude adoption, and the best models are tested and developed in English, yet en.wikipedia holds up better than any other language in my sample. I can think of any number of hypotheses for why, but I’m not sure how to test any of them. Spanish and Portuguese are losing the most, both in the 50%+ range.

#Decline is topic-specific

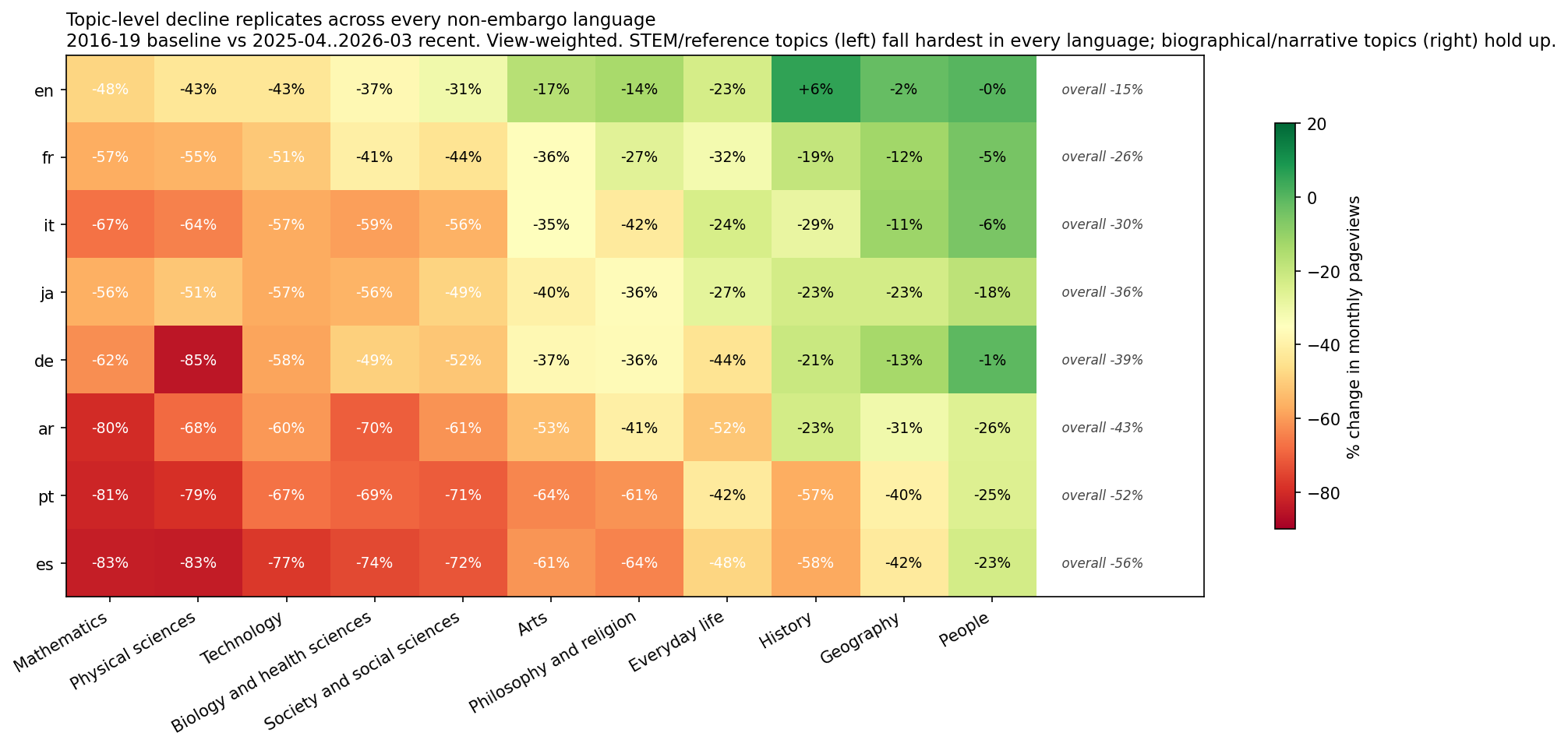

So what about by topic? This is view-weighted % change from the 2016–19 baseline to the most recent 12 months, one number per (language × topic) cell.

Rows are sorted by each language’s overall decline (English at top, Spanish at bottom). Columns are sorted by the topic’s mean decline across all eight languages (worst on the left, best on the right).
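A minimal sketch of how such a grid could be computed and ordered, assuming one hypothetical record per article of `(lang, topic, baseline_avg, recent_avg)`, where both numbers are mean monthly views in their window. Using monthly means matters because the baseline spans four years and the recent window spans one. “View-weighted” is read here as summing views before dividing, so high-traffic articles count for more; that reading, and the function names, are assumptions:

```python
from collections import defaultdict


def view_weighted_change(rows):
    """Percent change per (lang, topic) cell, weighting articles by their views.

    Each row is (lang, topic, baseline_avg, recent_avg): an article's mean
    monthly views in the 2016-2019 window and in the most recent 12 months.
    Summing views before dividing makes high-traffic articles count for more.
    """
    base, recent = defaultdict(float), defaultdict(float)
    for lang, topic, b, r in rows:
        base[lang, topic] += b
        recent[lang, topic] += r
    return {cell: 100 * (recent[cell] / base[cell] - 1) for cell in base}


def grid_order(rows, cells):
    """Row order (least-declining language first) and column order (worst topic first).

    Assumes every (lang, topic) combination is present in `cells`.
    """
    # Overall change per language: treat all of a language's articles as one cell.
    overall = view_weighted_change([(lang, "ALL", b, r) for lang, _, b, r in rows])
    langs = sorted({lang for lang, _ in cells},
                   key=lambda lang: overall[lang, "ALL"], reverse=True)
    topics = {topic for _, topic in cells}
    # Topic mean is an unweighted average of the per-language cells.
    mean = {t: sum(cells[lang, t] for lang in langs) / len(langs) for t in topics}
    return langs, sorted(topics, key=mean.get)


# Toy numbers, not real data.
rows = [
    ("en", "Mathematics", 100, 50), ("en", "People", 100, 100),
    ("es", "Mathematics", 100, 20), ("es", "People", 100, 80),
]
cells = view_weighted_change(rows)
print(grid_order(rows, cells))  # (['en', 'es'], ['Mathematics', 'People'])
```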

Read it column by column, and note how consistent each column is: Mathematics and Physical sciences are declining heavily in every language. At the other end, People is holding up fairly well everywhere, as are Geography and History.

If topic behavior varied by language, you’d see scattered speckle across the grid — some languages losing their Biology articles, others their Arts articles, others their People articles. Instead you see clean vertical bands. So an important takeaway, though I don’t know why: the per-topic ordering of decline is essentially the same in every language.
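One way to put a single number on “the same ordering in every language” (the post doesn’t report one) is Kendall’s coefficient of concordance, W: each language ranks the topics, and W measures how closely those rankings agree, from 0 (no agreement) to 1 (identical rankings). A minimal sketch, assuming a `cells` dict keyed by `(lang, topic)` as in a grid like the one above; this version skips the tie correction:

```python
def kendalls_w(cells, langs, topics):
    """Kendall's coefficient of concordance W for topic rankings across languages.

    Each language ranks the topics by % change (1 = worst decline). W is 1.0
    when every language gives the same ranking and 0.0 when all topics end up
    with equal rank sums. No tie correction is applied.
    """
    m, n = len(langs), len(topics)
    rank_sums = {t: 0 for t in topics}
    for lang in langs:
        ordered = sorted(topics, key=lambda t: cells[lang, t])
        for rank, topic in enumerate(ordered, start=1):
            rank_sums[topic] += rank
    mean_sum = m * (n + 1) / 2
    s = sum((r - mean_sum) ** 2 for r in rank_sums.values())
    return 12 * s / (m ** 2 * (n ** 3 - n))


# Toy cells: different magnitudes, same ordering in both languages.
toy = {("a", "X"): -60, ("a", "Y"): -30, ("a", "Z"): -5,
       ("b", "X"): -80, ("b", "Y"): -40, ("b", "Z"): -20}
print(kendalls_w(toy, ["a", "b"], ["X", "Y", "Z"]))  # 1.0
```

The toy example mirrors the point of the heatmap: the languages disagree on magnitudes but agree on order, and W only looks at order.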

#Details: what is collapsing; what is holding up

Worst-declining topics (top-3 in every single language):

- Mathematics: between −48% (en) and −83% (es). Mean across the eight languages: −67%.

- Physical sciences: between −43% (en) and −85% (de). Mean: −66%.

- Technology: between −43% (en) and −77% (es). Mean: −59%.

Best-holding topics (bottom-3 in every language):

- People: between −0.1% (en) and −25.8% (ar). Mean: −13%. In English, German, and French, this bucket is essentially flat. In Italian it’s −5.6%.

- Geography: between −2% (en) and −42% (es). Mean: −22%.

- History: between +5.5% (en, actually grew) and −58% (es). Mean: −28%.

The middle tier — Biology, Society and social sciences, Arts, Philosophy and religion, Everyday life — falls between those two groups.

#Does article maintenance matter?

I was asked by an interested Wikipedian to look harder at article recency. He told me that one theory in the Spanish wiki community is that their articles are not kept up to date, so they are penalized by Google and are falling faster than English ones. He asked me to see what I could puzzle out.

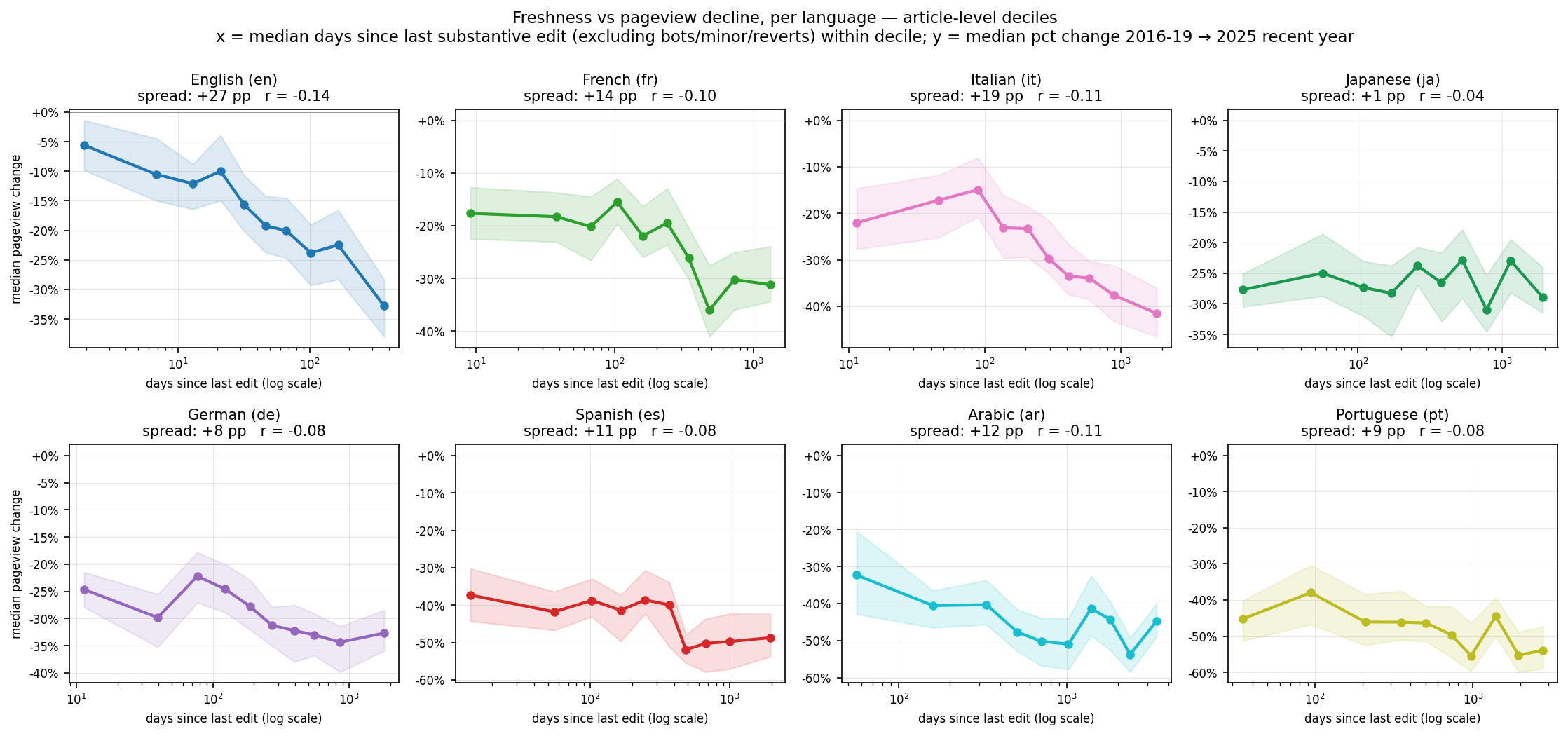

So: looking at each language’s articles by how recently each one was substantively edited (excluding bot edits, minor edits, and reverted vandalism — just real human content edits), do the fresh articles hold up while the stale ones fall harder? Put differently: does active editing predict traffic retention?
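A minimal sketch of the “last substantive edit” idea, assuming each revision has already been labeled with a few hypothetical flags. The `Revision` fields below are illustrative, not a MediaWiki API schema, and treating the undo of a vandal edit as non-substantive is also an assumption:

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Revision:
    """One edit to an article. Hypothetical fields, not a MediaWiki API schema."""
    timestamp: date
    is_bot: bool = False
    is_minor: bool = False
    was_reverted: bool = False  # e.g. vandalism that someone later undid
    is_revert: bool = False     # the undo itself, which adds no new content


def last_substantive_edit(revisions: list[Revision]) -> Optional[date]:
    """Date of the most recent human, non-minor, non-revert content edit."""
    dates = [
        r.timestamp
        for r in revisions
        if not (r.is_bot or r.is_minor or r.was_reverted or r.is_revert)
    ]
    return max(dates, default=None)


def days_stale(revisions: list[Revision], as_of: date) -> Optional[int]:
    """Days between the last substantive edit and `as_of`; None if there was none."""
    last = last_substantive_edit(revisions)
    return None if last is None else (as_of - last).days


history = [
    Revision(date(2025, 1, 1)),                      # real human content edit
    Revision(date(2025, 6, 1), is_bot=True),         # bot maintenance
    Revision(date(2025, 7, 1), was_reverted=True),   # vandalism
    Revision(date(2025, 7, 2), is_revert=True),      # its cleanup
]
print(days_stale(history, as_of=date(2026, 3, 31)))  # 454
```

Only the January 2025 edit counts, so the three later edits don’t make the article look fresher than it is.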

TL;DR: this is messy to tease out. At least when comparing the freshest and stalest articles, the answer is “for some languages, yes; for others, barely.”

The chart compares the decline of each language’s freshest 10% of articles with that of its stalest 10%. Some key observations:

English has the strongest effect by a wide margin. The freshest 10% of English Vital Articles declined only about 7%; the stalest 10% declined 34%. In other words, the stalest articles did roughly 27 percentage points worse than the freshest. Italian (19 pp) and French (14 pp) show more moderate gaps.
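“Percent worse” is ambiguous when both numbers are already percent changes. A small worked example using the English figures quoted above (about −7% for the freshest 10% and −34% for the stalest 10%; the 7% is approximate, so the outputs are rough) shows the two common readings. The function names are illustrative:

```python
def gap_pp(fresh_change, stale_change):
    """Difference between two percent changes, in percentage points."""
    return fresh_change - stale_change


def relative_shortfall(fresh_change, stale_change):
    """How much less traffic stale articles kept, as a percent of what fresh ones kept."""
    fresh_kept = 1 + fresh_change / 100
    stale_kept = 1 + stale_change / 100
    return 100 * (1 - stale_kept / fresh_kept)


print(gap_pp(-7, -34))                        # 27
print(round(relative_shortfall(-7, -34), 1))  # 29.0
```

The percentage-point gap is the conventional way to compare two percent changes.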

Japanese is an outlier, with a 1-percentage-point spread: freshly edited Japanese articles lost traffic at essentially the same rate as articles that hadn’t been substantively touched in years. I don’t have a good explanation for this, and I’d be curious whether anyone closer to Japanese Wikipedia’s editorial culture has one. (Portuguese and Spanish also look flatter on the graph, but that’s largely because all of their traffic is so far down.)
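A minimal sketch of the freshest-10% versus stalest-10% comparison for one language, assuming hypothetical per-article tuples of `(days_stale, baseline_avg, recent_avg)` and view-weighting within each group. The post doesn’t say how ties at the cutoff or the weighting were handled, so both are assumptions here:

```python
def fresh_stale_spread(articles, frac=0.10):
    """View-weighted % change for the freshest and stalest `frac` of articles.

    `articles` is a list of (days_stale, baseline_avg, recent_avg) for one
    language. Returns (fresh_change, stale_change, gap in percentage points).
    """
    ranked = sorted(articles, key=lambda a: a[0])  # freshest (fewest days) first
    k = max(1, int(len(ranked) * frac))

    def pct_change(group):
        base = sum(b for _, b, _ in group)
        recent = sum(r for _, _, r in group)
        return 100 * (recent / base - 1)

    fresh = pct_change(ranked[:k])
    stale = pct_change(ranked[-k:])
    return fresh, stale, fresh - stale


# Toy language with 20 articles: the 2 freshest keep 90%, the 2 stalest keep 60%.
toy = [(d, 100, 90 if d < 2 else 60 if d >= 18 else 75) for d in range(20)]
fresh, stale, gap = fresh_stale_spread(toy)
print(round(fresh), round(stale), round(gap))  # -10 -40 30
```

A spread near zero, as reported for Japanese, would mean the two groups’ percent changes are nearly equal even if both are large.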

Freshness is important, but it is not the biggest factor. I’ll probably go into more detail later, but a pooled multivariate regression across all languages, controlling for topic, article length, editor count, quality, and article age, confirms that staleness predicts decline in a real but modest way: it explains a slice of the within-language variance, not the majority of it. Topic and language are still the bigger variance-explainers.
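The post doesn’t give the regression’s exact specification. As a much simpler stand-in (not the author’s model), the sketch below estimates how much within-cell variance staleness explains after removing each (language, topic) mean. By the Frisch–Waugh–Lovell theorem, demeaning both variables within cells gives the same staleness slope as a regression with one dummy per cell. The other controls the post mentions (length, editor count, quality, age) are deliberately omitted, and the function name is hypothetical:

```python
from collections import defaultdict


def within_cell_fit(rows):
    """Slope and partial R^2 of staleness after removing (lang, topic) means.

    `rows` is a list of (lang, topic, staleness, pct_change). Demeaning both
    variables within each cell matches (Frisch-Waugh-Lovell) the staleness
    slope from pct_change ~ staleness + one dummy per cell. Raises
    ZeroDivisionError if either variable has no within-cell variation.
    """
    groups = defaultdict(list)
    for lang, topic, x, y in rows:
        groups[lang, topic].append((x, y))

    xs, ys = [], []
    for members in groups.values():
        mx = sum(x for x, _ in members) / len(members)
        my = sum(y for _, y in members) / len(members)
        for x, y in members:
            xs.append(x - mx)
            ys.append(y - my)

    sxx = sum(x * x for x in xs)
    sxy = sum(x * y for x, y in zip(xs, ys))
    syy = sum(y * y for y in ys)
    slope = sxy / sxx
    partial_r2 = sxy * sxy / (sxx * syy)  # share of within-cell variance explained
    return slope, partial_r2


# Toy rows: very different levels per language, same staleness slope.
rows = [
    ("en", "Mathematics", 0, -10), ("en", "Mathematics", 10, -20),
    ("es", "Mathematics", 0, -50), ("es", "Mathematics", 10, -60),
]
print(within_cell_fit(rows))  # (-1.0, 1.0)
```

On real data the partial R² would be well below 1. The post’s point is that it is a modest slice, while the cell means (topic and language) absorb most of the variation.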

Freshness slows the bleeding but doesn’t stop it. Even the freshest articles in every language lost traffic between 2016–19 and now. Active maintenance bends the curve; it doesn’t reverse it. English editors have protected some traffic by keeping articles fresh, yet English Wikipedia still lost 15% overall.

#Open questions for further investigation

Preliminary multivariate analysis was not very helpful: looking at all the data I could easily pull, no factors beyond these three (topic, language, freshness) had much impact. But my stats are very rusty, and I’d like to polish that analysis, and perhaps add more data sources, before I publish it. If you have suggestions, let me know.